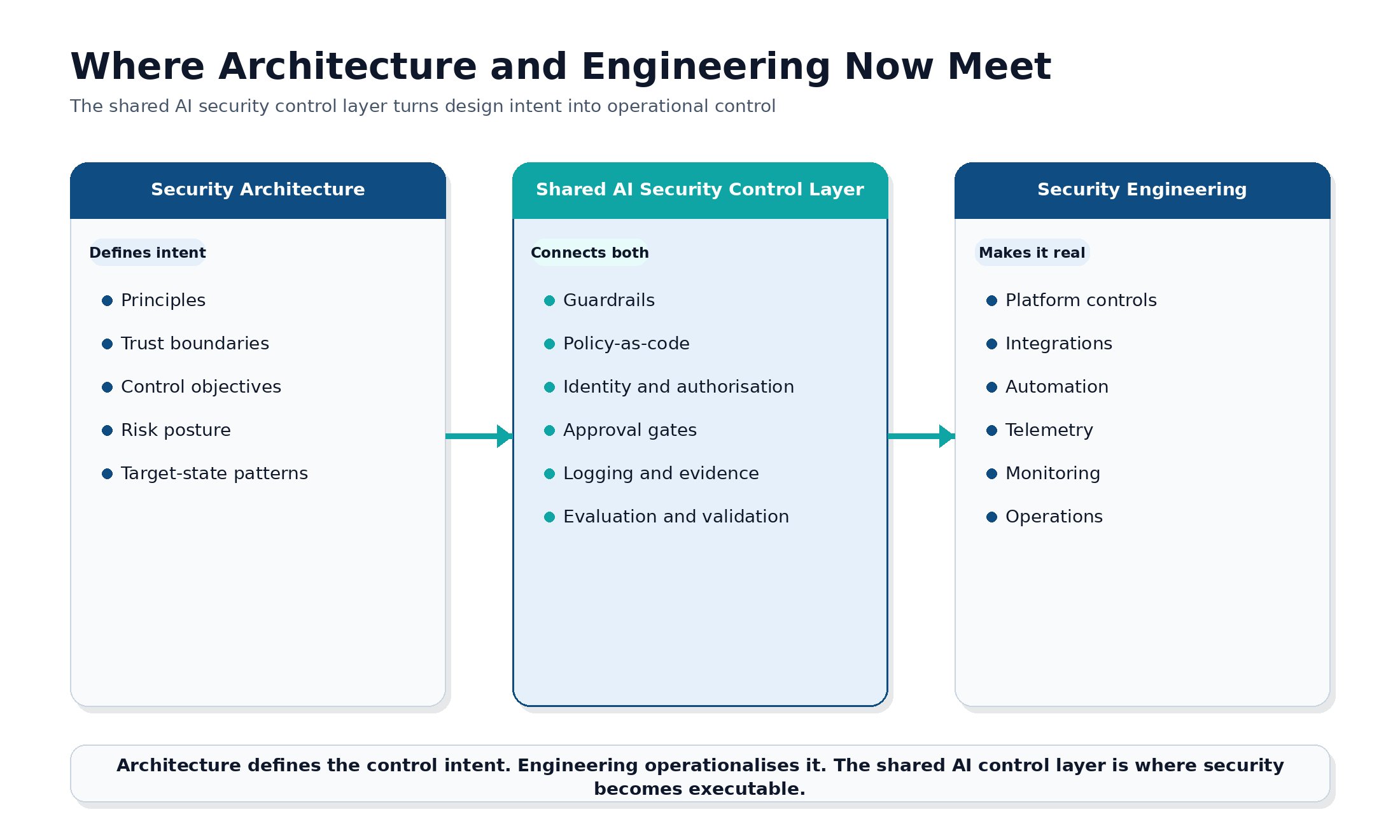

For years, many organisations treated security architecture and security engineering as separate, if closely related, disciplines. Security architecture sets the direction of principles, trust boundaries, target states, and control objectives. Security engineering turned that intent into tooling, integrations, automation, and operational controls. AI is shrinking that gap.

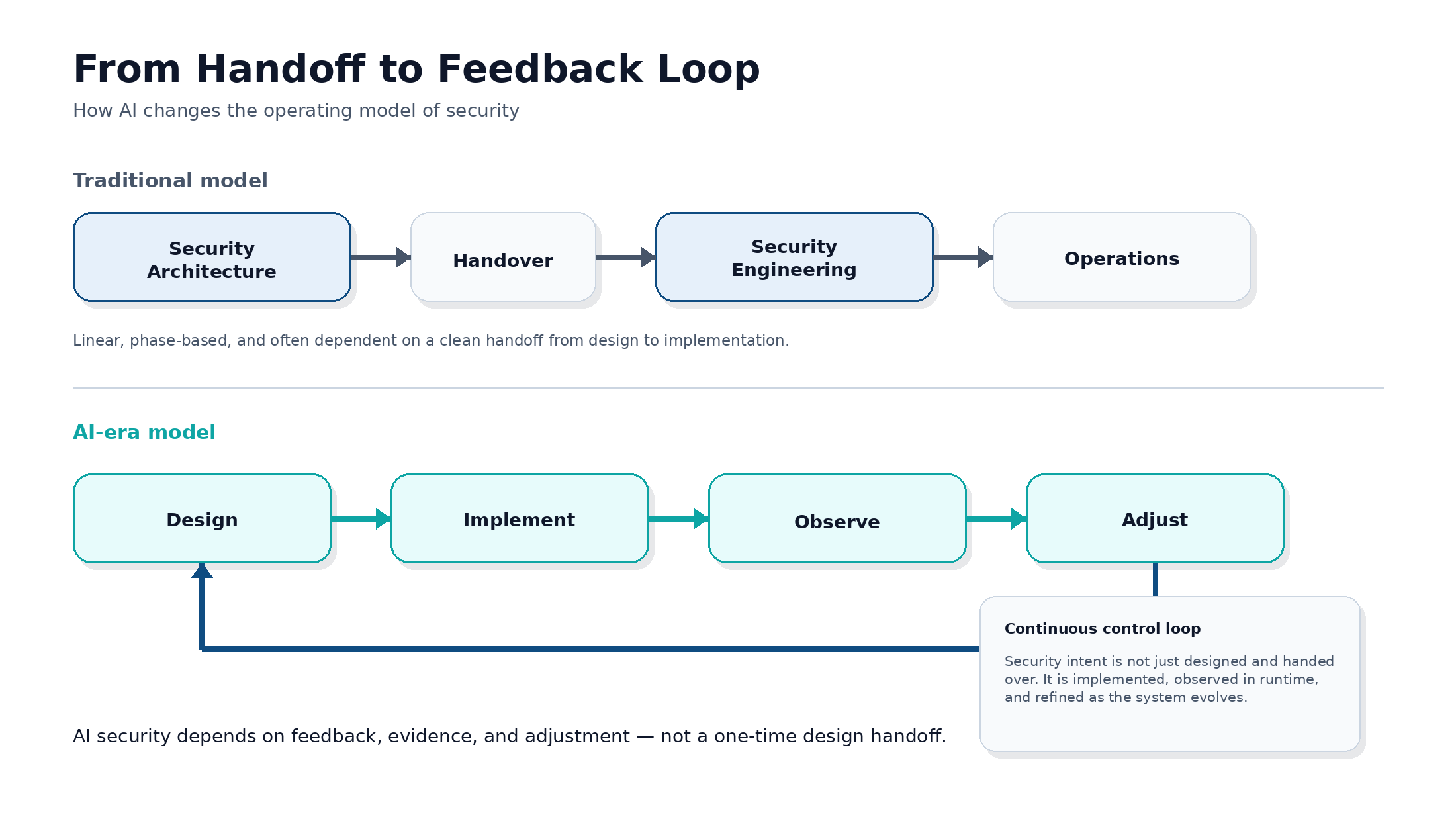

As organisations adopt large language models, retrieval pipelines, AI agents, and model platforms, the old handoff model starts to break down. Prompts, data, context, orchestration logic, and runtime behaviour shape AI systems. They do not behave like purely static, deterministic applications. In that environment, security can no longer be designed once and implemented later. It has to be designed, enforced, observed, and adjusted continuously. That is the shift from design to runtime — and it is bringing security architecture and security engineering closer together. The shift is simple but important: AI security works less like a linear handoff and more like a continuous control loop.

The old split no longer works as well

In traditional environments, the division was clearer. Architects defined what good looked like. Engineers built and ran the controls. The sequence was often straightforward: design first, implementation second. That model still has value, but AI exposes its limits. A principle such as “prevent sensitive data leakage” sounds reasonable at design time, but in an AI system, it becomes meaningful only when translated into concrete control points: prompt filtering, retrieval controls, tool permissions, output validation, session handling, logging, and escalation paths. If those mechanisms are not engineered properly, the architecture remains an aspiration. In other words, AI is forcing security teams to care less about the handoff and more about the feedback loop.

Why AI changes the relationship

First, AI systems behave differently from conventional applications. Traditional systems are usually built around predictable logic. AI systems are probabilistic. The same model can respond differently depending on how a request is framed, what data is retrieved, what memory is retained, or what tools the system is allowed to call. That changes the security question. It is no longer enough to ask whether the design is sound. We also have to ask how the system behaves under real operating conditions, whether the controls hold up, and what evidence exists when they do not hold up. Second, AI introduces new control points that sit between design and implementation. Teams now need to consider prompt injection, model misuse, retrieval poisoning, unsafe outputs, excessive agent autonomy, and data exposure via inference or memory. These are not purely architectural issues, nor purely engineering tasks. They sit at the intersection of both. Third, governance now depends on technical enforcement. Many organisations are creating AI governance frameworks covering privacy, security, compliance, model risk, and responsible AI. That is necessary, but governance only works when it connects to real mechanisms. It is not enough to say that approved models must be inventoried, prompts must be versioned, or risky outputs must be controlled. Those expectations need supporting controls in pipelines, platforms, and operations. That is the new overlap: governance and control intent must be translated into something executable.

Where architecture and engineering now meet

The clearest way to describe the intersection is that AI makes security design executable. That intersection increasingly includes policy-as-code, guardrails, gateway enforcement, identity controls for models and agents, approval gates for high-risk actions, structured logging, evaluation pipelines, and telemetry that can prove whether a control is working. Take an AI agent as an example. An architect may decide that the agent should access only approved tools, process only approved data classes, and require human approval before taking a sensitive action. Those are architectural decisions. But they only become real when engineering implements tool-level authorisation, data handling rules, approval workflows, and monitoring. The control exists only when both disciplines meet. This is a major shift. In AI systems, architecture is no longer just a target-state diagram, and engineering is no longer just downstream implementation. Both are part of a shared control system. This is the practical intersection: architecture defines the intent, engineering makes it real, and the shared control layer connects the two.

Threat modelling is now part of design

AI also changes the role of threat modelling. Historically, threat modelling was sometimes treated as a review exercise that happened alongside design. In AI systems, it needs to shape the design itself. A few examples make the point. Prompt injection affects how tools are exposed and what the model is trusted to do. Retrieval-augmented generation, or RAG, poisoning affects data ingestion, source validation, and trust scoring. Sensitive data leakage affects memory design, redaction patterns, and output controls. Agent misuse affects approval flows, separation of duties, and action boundaries. These threats are not side notes. They influence component choices, process design, and engineering priorities from the start. That makes threat modelling a shared responsibility across architecture and engineering, not a checkpoint owned by one side.

Runtime evidence matters as much as design intent

One of the most important lessons in AI security is that a good diagram does not prove a system is secure. A team may present a strong-looking design with identity integration, an API gateway, a guardrail layer, a vector store, and centralised logging. But the real questions come later. Are unsafe prompts being detected consistently? Are policy violations being logged in a usable way? Are tool calls actually restricted? Can the organisation trace which prompt, model, policy, or knowledge source influenced an outcome? Can it roll back safely when a model or policy update creates unexpected risk? These questions require runtime evidence. This is a better operating model for AI than the older pattern of design followed by handoff. That is why AI security increasingly depends on a continuous cycle:

Design → Implement → Observe → Adjust

What this means for organisations

For security leaders, the implications are practical. Security architects need a deeper working knowledge of how AI platforms, orchestration layers, guardrails, and telemetry actually operate. They do not need to become platform engineers, but they do need to understand the mechanics well enough to define realistic, enforceable controls. Security engineers, in turn, need a stronger voice in design. In AI environments, implementation choices can materially change risk posture, governance capability, and the quality of assurance. The organisations that do this well will define reusable, secure AI patterns instead of relying on project-by-project improvisation. They will govern models, prompts, and policies as controlled assets. They will embed evaluation and control validation into delivery pipelines. And they will treat observability as part of the architecture itself, not just an operational add-on.

The bigger shift

What AI is really changing is not just the tooling. It is changing the security operating model. Architecture still matters because organisations need principles, trust models, and control objectives. Engineering still matters because nothing is secure until it is implemented, monitored, and maintained. But AI reduces the space between the two. That is not a weakness. It is a sign of a more mature model. The organisations that adapt early will be better placed to build AI systems that are not only innovative but also governable, defensible, and trustworthy.

Closing thought

In the AI era, the strength of a security function will be measured less by the quality of its diagrams alone and more by how well it turns security intent into living, observable, enforceable systems. That is where security architecture and security engineering now meet. AI is the reason that meeting points matter more than ever.